Understanding buzzdetect model metrics

Overview

There are a few pieces of jargon we often use when referring to the performance of our buzzdetect models. Fortunately, as models go, the tests of our models are fairly straightforward. In this post, we’ll do a deep dive on our terminology and look at some of the behaviors and implications of the model metrics.

So far, we treat our models as simple binary predictors. Is there buzzing in the frame or not? The models can’t (yet) identify the insect producing the buzz and, while they’ve technically been trained to detect other events like rain and passing cars, we only include those events in the training dataset to improve performance on bees and haven’t tested model performance on non-buzz events.

Treating results as binary trials, there are three resulting measures of model performance that we refer to as the model metrics: sensitivity, precision, and false positive rate. These interrelated values are themselves functions of the threshold applied to the activation value for the buzz neuron of the model. Let’s define all five terms: the three metrics, the threshold, and the activation value.

TL;DR

Sensitivity is the probability that we get a detection given that a frame contains a buzz.

Precision is the probability that there is a buzz given that there was a detection.

False positive rate is the probability that a non-buzz frame produces a detection.

The threshold is the activation value above which we call a detection.

The activation value is the score given by the output neuron trained to detect buzzes.

Activation and Thresholds

Every row in a buzzdetect result file represents one frame: one discrete portion of audio that was fed into the model. Every column (except the “start” column) represents a different type of audio event the model has been trained to classify, with values corresponding to those neurons’ activations: the numerical output of the neural network for that neuron. The model is a huge pile of math; the activations are the outputs of that pile given the input audio.

The activation value is a bit abstract. We don’t modify the activations, though other tools do (e.g. Wood and Kahl 2024). We could bound the activations between zero and one, but this would only rescale the values; it wouldn’t really change how they’re used or what they mean. We could try to map the activations to a probability (and we do, later in this post), but this mapping is context-dependent. We don’t want to suggest that a given activation value implies a fixed chance of a buzz, so buzzdetect outputs the unmodified activation values.

To turn activations into detections, the user must apply a threshold. This is simply a number that sets the lower limit for a detection. When activation > threshold, a detection is called. The choice of a threshold depends on the signal-to-noise ratio of the underlying data. Sites with a high amount of pollinator activity will show obvious trends even under very strict thresholds. Thresholding buzzdetect results is handled by our buzzr package.
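To make the thresholding step concrete, here is a minimal Python sketch. It is not the buzzr implementation; the function name `call_detections` and the activation values are made up for illustration. The only thing taken from the text above is the rule itself: a detection is called when the activation is strictly greater than the threshold.

```python
# Hypothetical buzz-neuron activations, one per frame (made-up values).
buzz_activations = [-2.3, -0.4, 0.8, -1.1, 1.5]


def call_detections(activations, threshold):
    """Return True for each frame whose activation is strictly above the threshold."""
    return [activation > threshold for activation in activations]


# A strict threshold keeps only the strongest activation.
strict = call_detections(buzz_activations, threshold=1.0)
# A lenient threshold calls more frames as detections.
lenient = call_detections(buzz_activations, threshold=-1.0)

print(strict)   # [False, False, False, False, True]
print(lenient)  # [False, True, True, False, True]
```

The same activations give one detection or three depending on the threshold, which is why every metric below has to be read as a function of the threshold.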

The metrics: sensitivity, precision, and false positive rate

Applying a threshold turns our continuous activations into discrete detections. Each frame then falls into one of four states: true positive (a detection is called and a buzz really is present), false positive (a detection is called, but there was no buzz), true negative (no detection, no buzz), or false negative (a buzz was missed). This is where our metrics come in:

False positive rate is simply the probability that a non-buzz frame produces a detection. Given a lot of non-buzz audio, how often does the model think there’s a buzz?

Sensitivity is the probability that we get a detection given that a frame contains a buzz. Given a lot of buzzing audio, how often does the model correctly pick up the buzzing?

Precision is a little less straightforward. It’s the probability that there really is a buzz in the audio given that we see a detection. Essentially, it is the fraction of our detections that are signal rather than noise. This might sound similar to sensitivity, but it isn’t. Precision is a joint function of the other two metrics and of the background rate of buzzing in the audio.

It’s a function of false positive rate because the more likely we are to have false positives, the less confident we are that there will really be a buzz when we see a detection.

It’s a function of sensitivity because the more likely we are to pick up on true buzzes, the more confident we can be in our detections.

It’s a function of the background buzzing rate, because more buzzing means more chances for true positives and fewer chances for false positives. Imagine a model that inherently has a 5% false positive rate and 50% sensitivity. If we applied that model to 100 frames where 80 contain buzzes and 20 do not, we would expect to see 40 true positive frames and 1 false positive frame, a precision of 40/41 = 98%. If we applied the same model to 20 buzz frames and 80 non-buzz frames, we would expect to see 10 true positive frames and 4 false positive frames, a precision of 10/14 = 71%.
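The arithmetic above can be reproduced with a short Python sketch. The function names are ours, chosen for illustration, and nothing here is buzzdetect or buzzr code. The first function computes the three metrics from the four frame counts; the second computes the expected precision from sensitivity, false positive rate, and the fraction of frames that contain a buzz.

```python
def metrics_from_counts(tp, fp, tn, fn):
    """Compute sensitivity, false positive rate, and precision from frame counts.

    Assumes at least one buzz frame, one non-buzz frame, and one detection,
    so that none of the denominators is zero.
    """
    sensitivity = tp / (tp + fn)          # detections among buzz frames
    false_positive_rate = fp / (fp + tn)  # detections among non-buzz frames
    precision = tp / (tp + fp)            # buzzes among detections
    return sensitivity, false_positive_rate, precision


def expected_precision(sensitivity, false_positive_rate, buzz_fraction):
    """Expected precision given the two rates and the background rate of buzzing."""
    expected_tp = sensitivity * buzz_fraction
    expected_fp = false_positive_rate * (1 - buzz_fraction)
    return expected_tp / (expected_tp + expected_fp)


# Same model (50% sensitivity, 5% false positive rate), two buzzing rates.
busy_site = expected_precision(0.50, 0.05, buzz_fraction=0.80)
quiet_site = expected_precision(0.50, 0.05, buzz_fraction=0.20)

print(round(busy_site, 3))   # 0.976
print(round(quiet_site, 3))  # 0.714
```

Nothing about the model changes between the two calls; only the background rate of buzzing does, and precision moves from roughly 98% to roughly 71%.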

In a way, precision is the metric to be concerned about. Who cares how sensitive you are if you’re getting a lot of noise with that signal? What does it matter how low the false positive rate is if you can’t detect any bees in the first place? Or, in the other direction, maybe you can tolerate a lot more false positives because you would get so many more true positives! For this reason, we shoot for a threshold that corresponds to 95% precision.
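One way to find a threshold for a target precision is to sweep candidate thresholds over labeled test frames and keep the lowest one that meets the target, since lower thresholds retain more sensitivity. The Python sketch below does that under stated assumptions: the function names, the activations, and the labels are all hypothetical, and this is not necessarily how our published thresholds were derived.

```python
def precision_at(threshold, activations, is_buzz):
    """Precision of the detections called at this threshold, or None if there are none."""
    tp = sum(1 for a, b in zip(activations, is_buzz) if a > threshold and b)
    fp = sum(1 for a, b in zip(activations, is_buzz) if a > threshold and not b)
    if tp + fp == 0:
        return None  # no detections, so precision is undefined
    return tp / (tp + fp)


def lowest_threshold_for_precision(activations, is_buzz, target=0.95):
    """Return the lowest observed activation that, used as a threshold, meets the target.

    Precision is not guaranteed to rise smoothly with the threshold, so every
    candidate is checked in ascending order. Returns None if no candidate qualifies.
    """
    for threshold in sorted(set(activations)):
        precision = precision_at(threshold, activations, is_buzz)
        if precision is not None and precision >= target:
            return threshold
    return None


# Made-up test set: one activation and one hand label per frame.
activations = [-3.0, -2.0, -1.5, -1.0, -0.5, 0.0, 0.5, 1.0]
is_buzz = [False, False, True, False, False, True, True, True]

chosen = lowest_threshold_for_precision(activations, is_buzz, target=0.95)
print(chosen)  # -0.5
```

On this toy data, a threshold of -0.5 keeps three of the four buzz frames with no false positives. Any threshold found this way inherits the buzzing rate of the test audio, so it should be rechecked before being carried over to very different recordings.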

Metrics across the thresholds

Let’s graph how these metrics relate to one another as we move across threshold values. We’ll use the data from our tests on model_general_v3. These data are available in the Zenodo repository for the buzzdetect manuscript. Here, we’ll plot the entire range of activations seen during model testing. This plot is interactive; try moving your cursor across it to see the values change.

Precision bottoms out at ~17%, because that was the background rate of buzzing in our test audio. If you just call everything a buzz, you’ll still be right 17% of the time.

- Note that the test audio were only from daytime hours. The precision would be lower if we had included all of the nighttime audio.

Precision hits nearly 100% at a threshold of -0.7. There’s no point in cranking your threshold any higher.

Even a small increase in false positive rate can tank precision. 0.2% → 0.7% in false positive rate might not seem like much, but it drops precision from 99% to 90%. There’s a lot more non-buzz audio than there is buzz audio, so false positives really matter!
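The ~17% floor noted above follows directly from the definition of precision, as this small Python sketch shows. The frame counts are hypothetical and chosen only to give a 17% buzzing rate. The extra nighttime frames are likewise invented, and treating them as entirely buzz-free is an assumption made for illustration.

```python
def precision_if_everything_is_a_detection(n_buzz_frames, n_non_buzz_frames):
    """With the threshold below every activation, all frames become detections.

    Every buzz frame is then a true positive and every non-buzz frame is a
    false positive, so precision equals the background rate of buzzing.
    """
    return n_buzz_frames / (n_buzz_frames + n_non_buzz_frames)


# Hypothetical daytime test set with a 17% buzzing rate.
daytime_only = precision_if_everything_is_a_detection(17, 83)

# Same buzz frames plus 100 hypothetical nighttime frames, assumed buzz-free.
with_night = precision_if_everything_is_a_detection(17, 83 + 100)

print(round(daytime_only, 3))  # 0.17
print(round(with_night, 3))    # 0.085
```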

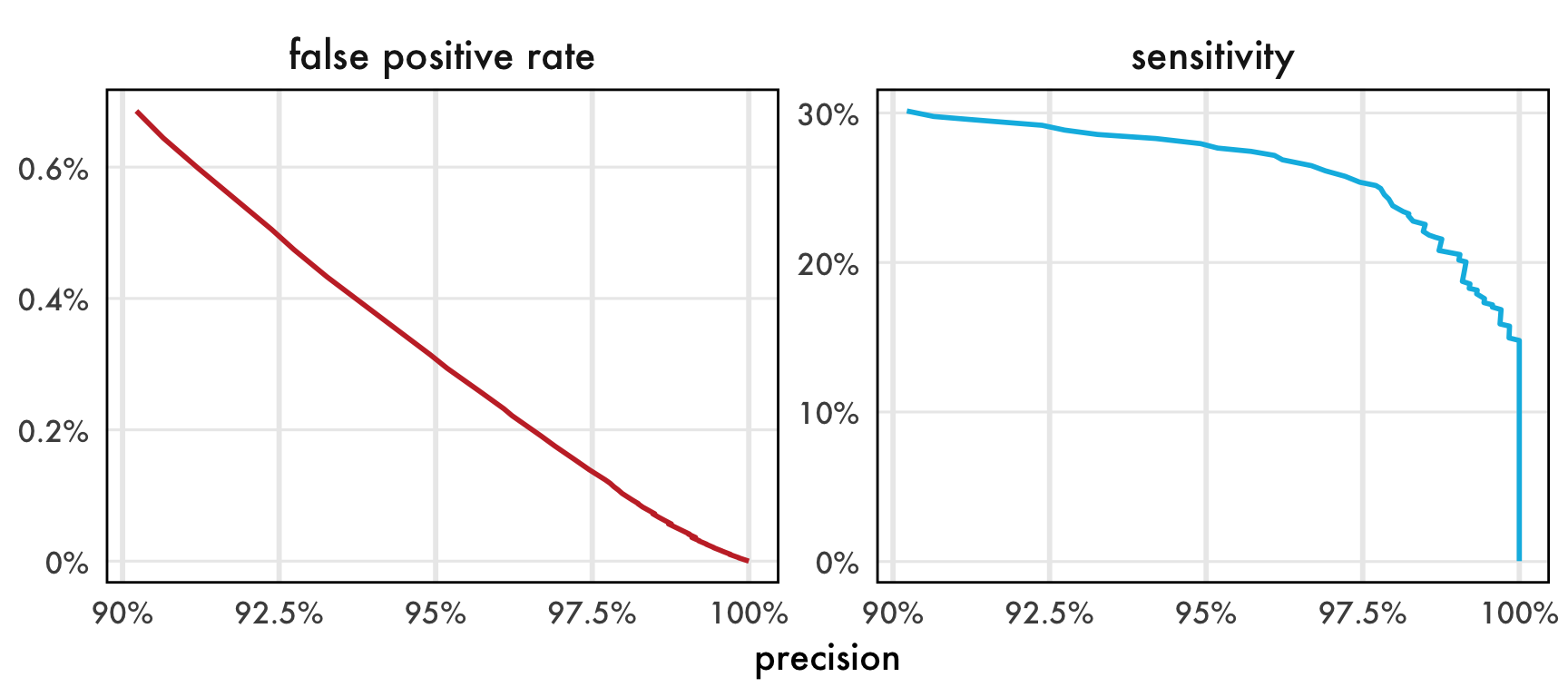

This graph covers all observed activation values of the buzz neuron. That’s a ridiculous extent when we’re suggesting using thresholds at 95% or higher precision. Let’s zoom in and graph things differently; let’s look at the trade-off between our reasonable range of precision values and our other two metrics.

Trading off metrics

The maximum useful sensitivity is about 30%. That might sound low, and sure, more is better, but there are a few considerations:

30% applied across every second of every day is a lot of detections. Hundreds to thousands!

In the paper, we dropped sensitivity all the way to 1% and saw qualitatively identical daily trends in the plants we recorded in.

What’s the sensitivity of a sweep net, anyways?

We can see that sensitivity stays relatively constant until it hits a wall shortly after 97.5% precision.

- This trend is actually expected because, in the activation space, the distance between 97.5% and 100% precision is much larger than that from 90% to 97.5%.

False positive rate is very well behaved as precision increases. This may just be because there are a lot more frames of non-buzz audio from which to calculate FPR.